If you’ve spent any time on the Linux command line, you’ve probably seen awk show up in tutorials and Stack Overflow answers. It looks a bit cryptic at first. But once you get the hang of it, it becomes one of those commands you reach for all the time.

awk is a text processing tool. It reads input line by line, splits each line into fields, and lets you do something with those fields. That’s the whole idea. The power comes from how flexible that “do something” part turns out to be.

A Simple awk Example

Let’s start with a file. Say you have a file called servers.txt that looks like this:

web01 192.168.1.10 running
web02 192.168.1.11 stopped
db01 192.168.1.20 running

To print just the first column (the server names), you’d run:

awk '{ print $1 }' servers.txt

Output:

web01
web02
db01

That $1 means “the first field”. $2 would give you the IP addresses, $3 the status. Simple.

How awk Splits Fields

By default, awk treats any run of whitespace (spaces or tabs) as the delimiter between fields, and ignores leading and trailing whitespace. So in the example above, it splits each line on the spaces and numbers the pieces starting from $1.

There’s also a special variable $0 which means the entire line. So print $0 prints the whole line. Not very exciting on its own, but useful when you’re combining it with other things.
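To see the default splitting in action, here is a small sketch that feeds awk one line with uneven spacing. The sample line is made up for illustration, and NF (awk's built-in count of fields on the current line) is not covered in the text above.

```shell
# Made-up sample line: leading spaces, a tab, and a run of spaces.
# NF is the number of fields on the line, so $NF is the last field.
fields=$(printf '  web01\t192.168.1.10     running\n' |
  awk '{ print NF ": " $1 " | " $2 " | " $NF }')

echo "$fields"
# prints: 3: web01 | 192.168.1.10 | running
```

However the whitespace is arranged, awk still sees three fields.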

Changing the Field Separator

Whitespace isn’t always your delimiter. A very common use case is parsing CSV files or colon-separated files like /etc/passwd.

You can set a custom field separator with the -F option:

awk -F: '{ print $1 }' /etc/passwd

This splits each line on : and prints the first field, which in /etc/passwd is the username. Try it on your own system. It’s a satisfying little command.

You can use any character as the separator. For a simple CSV file (one with no quoted fields that contain commas):

awk -F, '{ print $2 }' data.csv

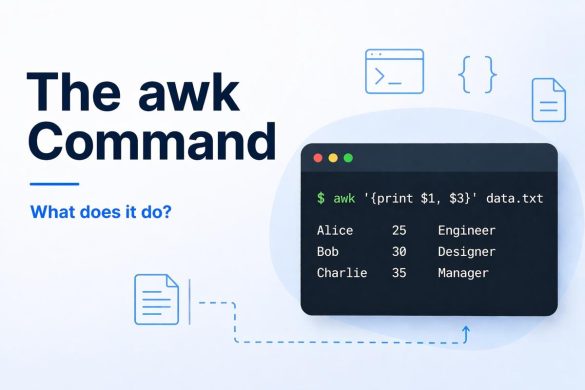
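As a rough sketch of the CSV case, the snippet below uses hypothetical file contents with a header row. NR, awk's built-in line counter, is an addition here and is used to skip that header. Splitting on a plain comma is only safe when no field contains a quoted comma.

```shell
# Hypothetical CSV contents; the first line is a header row.
csv='name,ip,status
web01,192.168.1.10,running
db01,192.168.1.20,running'

# NR is the current line number, so NR > 1 skips the header.
ips=$(printf '%s\n' "$csv" | awk -F, 'NR > 1 { print $2 }')

echo "$ips"
# prints:
# 192.168.1.10
# 192.168.1.20
```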

Printing Multiple Fields

You’re not limited to printing one field at a time. Just list them separated by a comma:

awk '{ print $1, $3 }' servers.txt

Output:

web01 running
web02 stopped
db01 running

The comma tells awk to join the fields with the output field separator, which is a space by default. If you want a different separator, set the OFS variable:

awk 'BEGIN { OFS="," } { print $1, $3 }' servers.txt

Output:

web01,running
web02,stopped
db01,running

That BEGIN block runs once before awk processes any lines. It’s handy for setting variables upfront.
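One related detail: OFS only applies when awk builds the output from separate fields, so a bare print of the whole line keeps the original spacing. A common idiom, sketched here with sample data that mirrors servers.txt, is to assign a field to itself so awk rebuilds the line with the new separator.

```shell
# Sample data mirroring two lines of servers.txt.
servers='web01 192.168.1.10 running
web02 192.168.1.11 stopped'

# Assigning to any field (even to itself) makes awk rebuild $0 using OFS.
csv=$(printf '%s\n' "$servers" | awk 'BEGIN { OFS="," } { $1 = $1; print }')

echo "$csv"
# prints:
# web01,192.168.1.10,running
# web02,192.168.1.11,stopped
```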

Filtering Lines with a Pattern

One of the things that makes awk really useful is that you can filter which lines get processed. You put a pattern before the action block, and awk only runs the action on lines that match.

Going back to our servers.txt, to print only the lines where the status is “running”:

awk '$3 == "running" { print $1 }' servers.txt

Output:

web01
db01

You can also use regular expressions as the pattern. To find any line containing “web”:

awk '/web/ { print $0 }' servers.txt

This works a lot like grep, but you can combine the filtering with field extraction in one go.
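Patterns can also be tied to a single field and combined. The sketch below uses the ~ operator to match a regular expression against one field and && to require a second condition. The data is inlined so the snippet runs without servers.txt.

```shell
# Same contents as servers.txt, inlined for a self-contained run.
servers='web01 192.168.1.10 running
web02 192.168.1.11 stopped
db01 192.168.1.20 running'

# $1 ~ /^web/ matches names starting with "web"; && adds the status check.
running_web=$(printf '%s\n' "$servers" |
  awk '$1 ~ /^web/ && $3 == "running" { print $1, $2 }')

echo "$running_web"
# prints: web01 192.168.1.10
```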

Using awk to Do Math

awk can handle arithmetic, which makes it great for quick calculations on tabular data. Say you have a file with some numbers:

item1 10 2.50
item2 5 4.00
item3 8 1.75

To calculate the total cost per item (quantity × price):

awk '{ print $1, $2 * $3 }' items.txt

Output:

item1 25
item2 20
item3 14

You can also accumulate a running total using a variable:

awk '{ total += $2 * $3 } END { print "Total:", total }' items.txt

Output:

Total: 59

Notice the END block here. It’s the opposite of BEGIN: it runs once after all lines have been processed.
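For money values you will often want a fixed number of decimals. One way to get that, shown here as a sketch rather than the only approach, is awk's printf, which works like the C function of the same name. The variable names cost and total are just illustrative.

```shell
# Same contents as items.txt, inlined for a self-contained run.
items='item1 10 2.50
item2 5 4.00
item3 8 1.75'

# Print each line cost with two decimals, then the grand total at the end.
report=$(printf '%s\n' "$items" | awk '
  { cost = $2 * $3; total += cost; printf "%s %.2f\n", $1, cost }
  END { printf "Total: %.2f\n", total }
')

echo "$report"
# prints:
# item1 25.00
# item2 20.00
# item3 14.00
# Total: 59.00
```

Unlike print, printf does not add a newline on its own, which is why each format string ends in \n.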

Counting Lines That Match

Want to count how many servers are running?

awk '$3 == "running" { count++ } END { print count }' servers.txt

Output:

2

The count++ just increments a counter each time a matching line is found.
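One edge case is worth knowing: if no line matches, count is never set and print count emits an empty line. A small sketch of a common workaround is to add 0 so the result is always a number. The "failed" status below is hypothetical and chosen because it matches nothing.

```shell
# Same contents as servers.txt, inlined for a self-contained run.
servers='web01 192.168.1.10 running
web02 192.168.1.11 stopped
db01 192.168.1.20 running'

# count + 0 forces a numeric result, so an unset count prints as 0.
failed=$(printf '%s\n' "$servers" |
  awk '$3 == "failed" { count++ } END { print count + 0 }')
running=$(printf '%s\n' "$servers" |
  awk '$3 == "running" { count++ } END { print count + 0 }')

echo "failed=$failed running=$running"
# prints: failed=0 running=2
```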

Piping Output into awk

You don’t have to work with files. awk is perfectly happy reading from a pipe, which is where it really shines in day-to-day shell use.

For example, to list all running processes and show just the owning user and PID of each (the first two columns of ps aux):

ps aux | awk '{ print $1, $2 }'

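Or to check disk usage and flag anything over 80%. Adding 0 to $5 turns a value like 85% into the number 85, so the comparison is numeric rather than alphabetical, and NR > 1 skips the header line:

df -h | awk 'NR > 1 && $5+0 > 80 { print $6, "is getting full:", $5 }'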

Combining awk with other commands like ps, df, netstat, or cat is where it starts to feel like a proper sysadmin tool.
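Real df output differs on every machine, so here is a sketch of a disk-usage threshold check run against made-up sample output. It compares the percentage as a number, because a string comparison would rank "9%" above "80%" and "100%" below it.

```shell
# Made-up df -h style output so the result is reproducible.
df_sample='Filesystem Size Used Avail Use% Mounted on
/dev/sda1 50G 45G 5G 90% /
/dev/sdb1 100G 9G 91G 9% /data
/dev/sdc1 200G 200G 0 100% /backup'

# NR > 1 skips the header; $5 + 0 converts "90%" to the number 90.
full=$(printf '%s\n' "$df_sample" |
  awk 'NR > 1 && $5 + 0 > 80 { print $6, "is getting full:", $5 }')

echo "$full"
# prints:
# / is getting full: 90%
# /backup is getting full: 100%
```

To run it for real, swap the printf for df -h. Mount points that contain spaces would need extra handling.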

A Quick Reference

Here are the most useful awk building blocks in one place:

| What you want | How to do it |
|---|---|
| Print field N | { print $N } |
| Print whole line | { print $0 } |
| Custom delimiter | -F"," or -F: |
| Filter by field value | $N == "value" { ... } |
| Filter by pattern | /pattern/ { ... } |
| Run before processing | BEGIN { ... } |
| Run after processing | END { ... } |
| Arithmetic | $2 * $3, total += $2 |

awk has a lot more depth to it. You can write multi-line programs, define functions, and handle some surprisingly complex data transformations. But the examples above cover the 90% you’ll actually use day to day. Start with those and build from there.

If you found this useful, check out our guides on grep and sed. The three of them together cover most of what you’ll ever need for text processing on the command line.